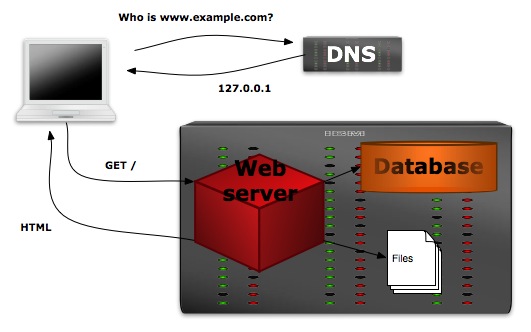

One of the requirements in the migration of our web sites to Drupal is that we create a robust and redundant platform that can stay running or degrade gracefully when hardware or software problems inevitably arise. While our sites get heavy use from our communities and the public, our traffic numbers are nowhere near those of a top-1000 site and could comfortably run off of one machine that runs both the database and the web-server.

Single Machine Configuration

This simple configuration, however, has the major weakness that any hiccups in the hardware or software of the machine will likely take the site offline until the issues can be addressed. In order to give our site a better chance at staying up as failures occur, we separate some of the functional pieces of the site onto discrete machines and then ensure that each function is redundant or fail-safe. This post and the next will detail a few of the techniques we have used to build a robust site.

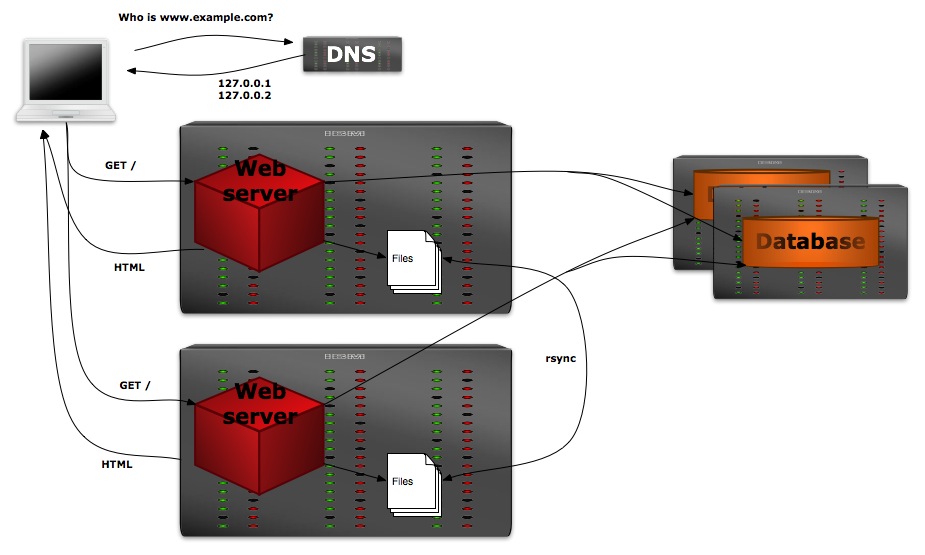

Pull out the database, use multiple web-servers

The two main components of Drupal (and most similar web applications) are the web-server, which handles PHP execution and file-serving, and the MySQL database, which stores all data with the exception of uploaded files. By putting the database on a separate machine we can have multiple machines acting as front-end web-servers, all of them reading and writing to the same database. In this way, it doesn’t matter which web-server handles a given request, as they will all get the same information out of the database. With two or more web-servers, our platform gains some redundancy since one web-server can fail while another keeps handling requests.

With both web-servers pointing at the same database server, the database server remains a single point of failure. Database clustering can alleviate this problem, but will be the subject of a future post.

Multiple web-server challenges

This redundancy does come at a cost in complexity, however, since we need to ensure that any uploaded files are available on both web-servers. There seem to be two primary ways of tackling this problem (without resorting to costly and complex distributed file-system tools). The first is to use rsync to copy files between the web-servers every few minutes.

Two web servers with rsync

While this is reasonably simple to set up between two web-servers, it comes with significant downsides:

- Files cannot be deleted in the sync as newly-added files will exist on only one web-server. Since the sync is two-way, there is no way for the rsync processes to tell the difference between a new file and a deleted file.

- Requests that come to the “other” web-server will not be able to access new files until the sync happens.

- If additional web-servers are added, the sync process needs to be updated on every existing web-server to include the new web-server.
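The deletion problem in the first point can be reproduced with a small sketch. Here two local directories stand in for the upload directories of the two web-servers (over the network, the second argument would be a `host:path` destination), and the function name `two_way_sync` is made up for this illustration.

```shell
#!/bin/sh
# Two-way rsync sketch. The directory arguments are stand-ins for the upload
# directories on two web-servers; they are not paths from our real setup.

two_way_sync() {
    # --delete is deliberately left out: with it, the first pass would remove
    # any file that had so far been uploaded only to the second side.
    rsync -a "$1/" "$2/"
    rsync -a "$2/" "$1/"
}

# Run only when both directories are given on the command line.
if [ "$#" -eq 2 ]; then
    two_way_sync "$1" "$2"
fi
```

If a file is removed from the first directory after it has already been copied to the second, the next run copies it straight back, because the sync cannot distinguish "deleted here" from "not yet copied here".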

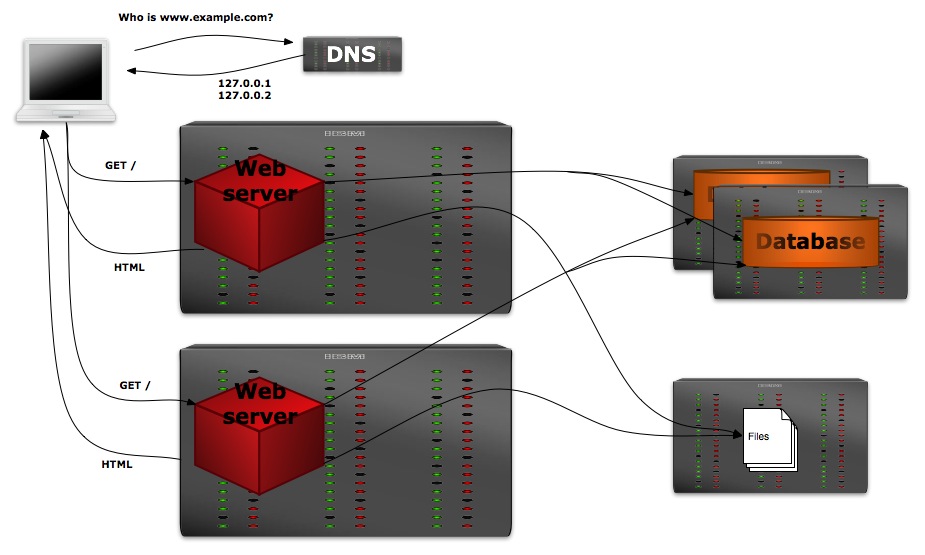

The other alternative is to store uploaded files on a separate file-server, whose upload directory is mounted on each web-server using NFS. This method eliminates the synchronization problems, since all web-servers are essentially writing to the same directory.

Two web servers with NFS

On top of the complexity of adding a fourth machine (the file-server) to our mix, this method also leaves us with the file-server as a single point of failure — were it to go down, no uploaded files would be accessible.

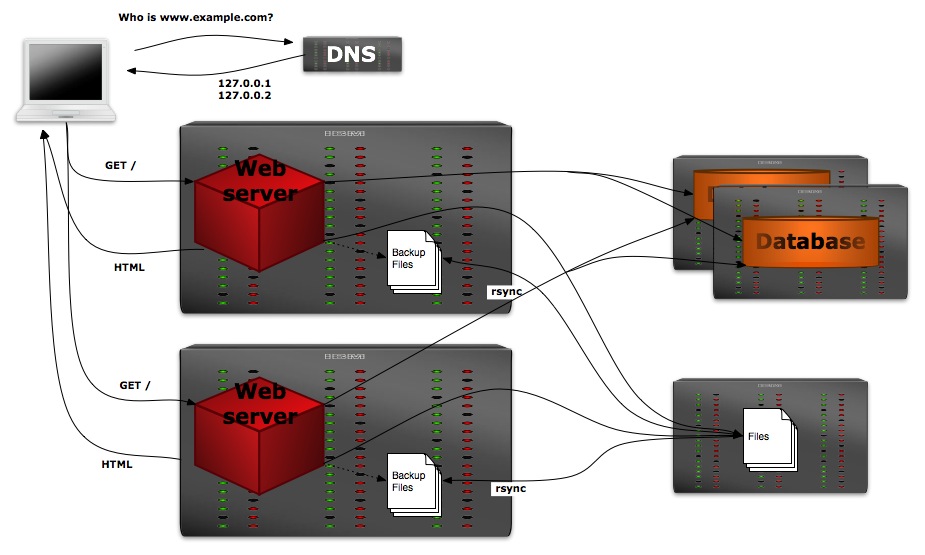

Best of both worlds

In order to better solve this problem, the approach we took is to go the NFS route, but augment it with a backup copy of the files stored on the local file-system of each web-server. Every ten minutes or so a script (sync_files.sh) runs that checks to see if the shared NFS directory is available, and if so syncs the uploaded-files to a backup location on the web-server’s file-system. This backup copy has its permissions set so that the Apache process cannot write to it, preventing synchronization problems if the shared NFS directory goes offline and we need to serve files out of the backup copy.
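A minimal sketch of what a script like sync_files.sh could look like is below. It is not our exact script: the marker file `.nfs_ok` (a file assumed to exist only on the file-server), the rsync options, and the assumption that cron runs the script as root or another non-Apache user are all choices made for this illustration.

```shell
#!/bin/sh
# sync_files.sh (sketch): refresh the local read-only backup from the NFS share.

# Copy $1 (the NFS share) into $2 (the local backup), but only when the share is up.
sync_files() {
    nfs_dir=$1
    backup_dir=$2

    # Hypothetical marker file that lives only on the file-server. If it is
    # missing, the share is unmounted or unreachable, so skip this run rather
    # than let --delete empty the backup.
    [ -e "$nfs_dir/.nfs_ok" ] || return 1

    # -rlt copies recursively and keeps symlinks and modification times but
    # not ownership, so the copies belong to the user running the script.
    # --perms with --chmod=go-w strips group/other write bits from the copies.
    rsync -rlt --perms --chmod=go-w --delete "$nfs_dir/" "$backup_dir/"
}

# Run only when both paths are given, e.g. from cron:
#   sync_files.sh /mnt/files /srv/files_read_only
if [ "$#" -eq 2 ]; then
    sync_files "$1" "$2"
fi
```

Because this sync only ever runs in one direction, `--delete` is safe here: a file removed from the shared directory is simply removed from the backup on the next run.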

Two web servers with NFS and local backup copies.

A second script (check_link.sh) runs every minute and checks to see if the shared NFS directory is available. If it is offline, this script changes the symbolic link of our “files” directory so that Drupal will now use the read-only backup copy for its files. If the NFS directory comes back online, this script will again update the symbolic link to point at our writable shared NFS directory.
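The link-switching logic can be sketched roughly as follows. This is an illustration rather than our exact script: the availability test relies on a hypothetical marker file, `.nfs_ok`, assumed to exist only on the file-server, and the function names are invented for the example.

```shell
#!/bin/sh
# check_link.sh (sketch): keep the "files" link pointed at a usable directory.

# Succeeds if the NFS share at $1 is mounted and answering. The marker file
# is an assumption; it is absent both when the share is unmounted and when
# the file-server does not respond.
nfs_available() {
    [ -e "$1/.nfs_ok" ]
}

# Point the symbolic link $2 at directory $1, touching it only on a change.
point_link_at() {
    if [ "$(readlink "$2" 2>/dev/null)" != "$1" ]; then
        # -n replaces the link itself instead of descending into its target.
        ln -sfn "$1" "$2"
    fi
}

# $1 = NFS share, $2 = read-only backup, $3 = the switched "files" link.
check_link() {
    if nfs_available "$1"; then
        point_link_at "$1" "$3"
    else
        point_link_at "$2" "$3"
    fi
}

# Run only when all three paths are given, e.g. from cron:
#   check_link.sh /mnt/files /srv/files_read_only /srv/files
if [ "$#" -eq 3 ]; then
    check_link "$1" "$2" "$3"
fi
```

Comparing the current target before calling `ln` means the link is rewritten only at the moment of a fail-over or recovery, not on every one-minute run.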

An important consideration in this setup is that the NFS share is mounted in ‘soft’ mode so that file-access errors will time out quickly and allow for a timely switch-over to our backup files.

files.example.edu:/images /mnt/files nfs soft 0 0

An example 'soft' mount line in /etc/fstab

If the default ‘hard’ NFS mount is used, the check_link processes will hang indefinitely while trying to communicate with the file-server and never switch to our backup files.
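Since a forgotten 'soft' option only shows itself during an outage, it may be worth checking for it ahead of time. The helper below is a hypothetical sketch (the name `is_soft_mount` is made up): it looks for the `soft` option in a mounts table, reading /proc/mounts by default on the assumption of a Linux web-server. /etc/fstab uses the same column layout, so it can be checked the same way.

```shell
#!/bin/sh
# Sketch: report whether a mount point carries the 'soft' option.

# $1 = mount point, $2 = mounts table to read (defaults to /proc/mounts).
is_soft_mount() {
    awk -v mp="$1" '
        # Column 2 is the mount point, column 4 the comma-separated options.
        $2 == mp {
            n = split($4, opts, ",")
            for (i = 1; i <= n; i++)
                if (opts[i] == "soft") found = 1
        }
        END { exit (found ? 0 : 1) }
    ' "${2:-/proc/mounts}"
}

# Run only when a mount point is given, e.g.:
#   is_soft_mount.sh /mnt/files /etc/fstab
if [ "$#" -ge 1 ]; then
    if is_soft_mount "$@"; then
        echo "$1: soft"
    else
        echo "$1: not mounted soft"
    fi
fi
```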

Here is an example layout on the web-server to accomplish this setup:

    # The scripts that will be run by cron:
    /usr/local/bin/check_link.sh    # Run every minute
    /usr/local/bin/sync_files.sh    # Run every 10 minutes

    # The mounted NFS share:
    /mnt/files/

    # The backup copy of files:
    /srv/files_read_only/

    # The 'files' symbolic link, pointing normally at the NFS share:
    /srv/files/ => /mnt/files/

    # On NFS failure, this link will be switched to the backup directory:
    /srv/files/ => /srv/files_read_only/

    # The Drupal code directory:
    /srv/drupal/

    # The files directory for a site is a link into the switched files link
    /srv/drupal/sites/www.example.com/files/ => /srv/files/www.example.com/files/

By mounting the shared NFS directory, keeping a read-only local copy of the files, and monitoring the state of the NFS directory we gain the following benefits:

- No problems with synchronization as all web-servers share the same remote filesystem.

- Synchronization of the local backup copies is not a problem as this is always a one-way sync rather than a two-way sync between different web-servers.

- While the NFS file-server is still a single point of failure, read access to the uploaded files (via the backup copy) will be restored after a maximum of one minute plus the NFS time-out (2 minutes by default for ‘soft’ mounts).

- The web-servers don’t need to know about each other, easing configuration if additional web-servers are added.

This configuration adds an extra machine to the platform mix and a bit of complexity, but it makes normal operation robust (instant file availability to all web-servers) and allows for graceful degradation (file-access becomes read-only) if the file-server goes down.

Many thanks to our system administrator, Mark Pyfrom, for all of his help in developing and testing this platform.

* Update on 2009-09-10: added note about ‘soft’ NFS mounts and an example file-system layout.

Nice article and illustrations. However, one point I did not see you address was how to handle and manage the DNS requests for load balancing? Are you assuming a round robin approach? Would like to hear your thoughts on this.

Thx,

JJ

Hi JJ,

We are currently just using round-robin DNS, where the DNS responses include all of the IP addresses of the web servers but rotate which one is listed first.

If the host listed first is down, clients will generally try the next host in the list after a timeout period. While this can cause a large increase in response time when a host goes down, it does provide fail-over abilities without having to add a dedicated load-balancer in front of the web servers.

I’ll post an update if we ever change this set-up, but I doubt that we will ever need to scale to the point where uneven webserver load becomes our pain-point.

– Adam